How I Got Sub-200ms Time-to-First-Audio Streaming LLM Responses on iOS

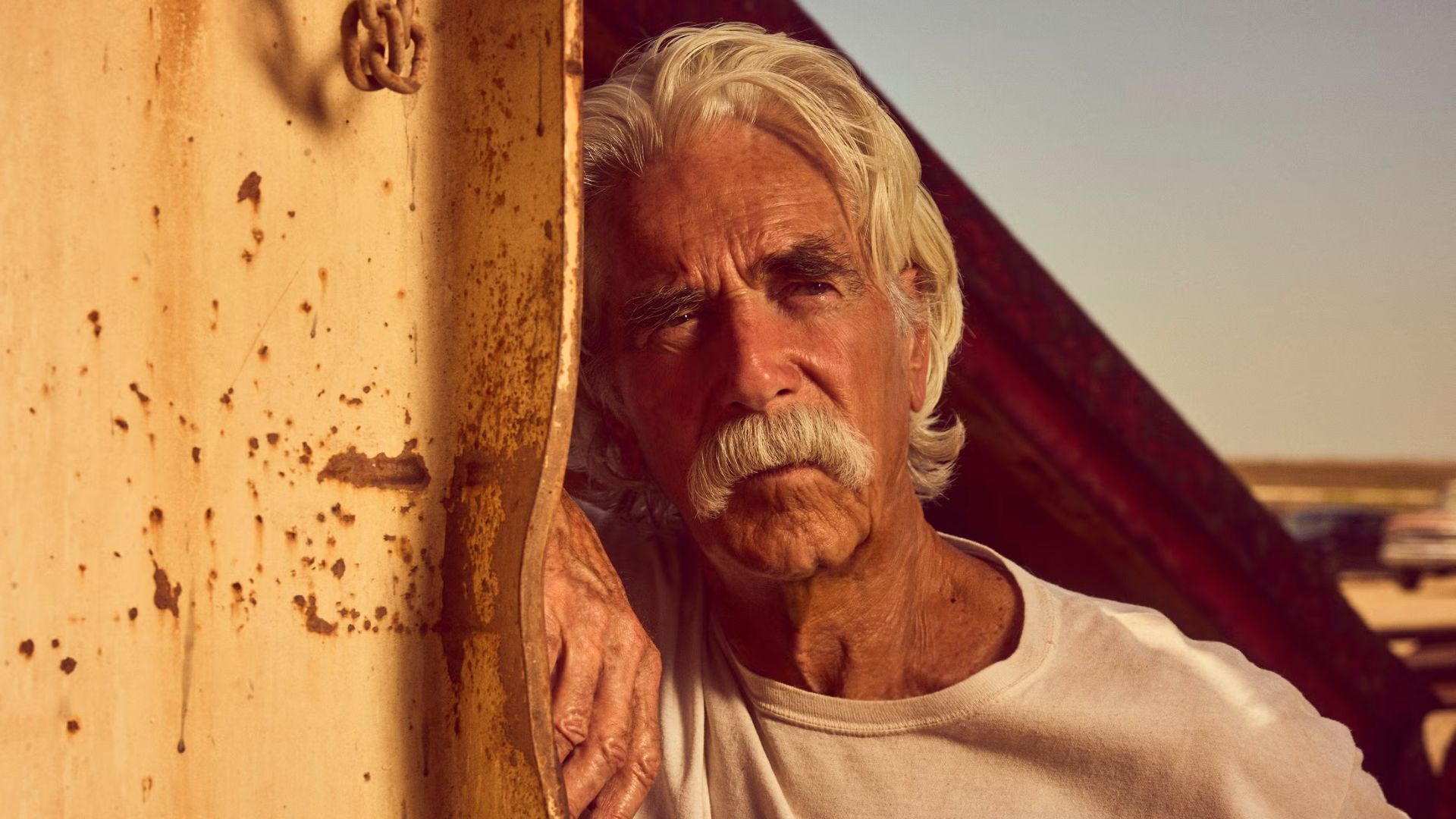

Source: DEV Community

If you’re building an AI-powered voice feature on iOS, the kind where a user asks a question and hears the answer spoken aloud in real time, you’ve probably hit the same wall I did. The LLM streams text back token by token. You need speech output that starts while the text is still arriving. And you need it to feel instant. Not “wait three seconds while I buffer the entire response, synthesize it into one big audio file, then play it back.”

This post is about the engineering problem hiding behind that requirement, the three failed approaches I tried, and the architecture that finally got me to sub-200ms time-to-first-audio on a real device.

The Setup

I was building a conversational iOS app. The flow looks simple on paper:

1. User speaks or types a question
2. App sends it to an LLM API (streaming response)
3. Text chunks arrive over several seconds
4. Each chunk gets sent to a cloud TTS service (ElevenLabs, Google Cloud)
5. Audio comes back and plays through the speaker

Steps 1–3 are well-understood.
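To make the five-step flow concrete, here is a minimal Swift sketch of the "simple on paper" version. It is not the architecture this post arrives at, only the naive shape of the pipeline. Every name in it (`streamLLMResponse`, `synthesizeSpeech`, `runNaivePipeline`) is a hypothetical stand-in: the LLM stream yields canned chunks, and the TTS call returns fake bytes instead of contacting ElevenLabs or Google Cloud.

```swift
import Foundation

// Hypothetical stand-in for steps 2-3: an LLM API that streams text chunks.
// A real client would yield chunks as they arrive over the network.
func streamLLMResponse(to question: String) -> AsyncStream<String> {
    AsyncStream { continuation in
        for chunk in ["Sure. ", "Here is ", "the answer."] {
            continuation.yield(chunk)
        }
        continuation.finish()
    }
}

// Hypothetical stand-in for step 4: a cloud TTS request.
// Returns the text's UTF-8 bytes as placeholder "audio".
func synthesizeSpeech(for text: String) async -> Data {
    Data(text.utf8)
}

// The naive flow: one TTS round trip per chunk, strictly in arrival order.
// Returns the audio buffers in the order they would be played (step 5).
func runNaivePipeline(question: String) async -> [Data] {
    var playbackQueue: [Data] = []
    for await chunk in streamLLMResponse(to: question) {
        let audio = await synthesizeSpeech(for: chunk)
        playbackQueue.append(audio) // a real app would hand this to the audio engine
    }
    return playbackQueue
}
```

Note that in this shape each chunk waits for the previous chunk's synthesis to finish before its own request starts, so every TTS round trip adds directly to the delay before later audio can play.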